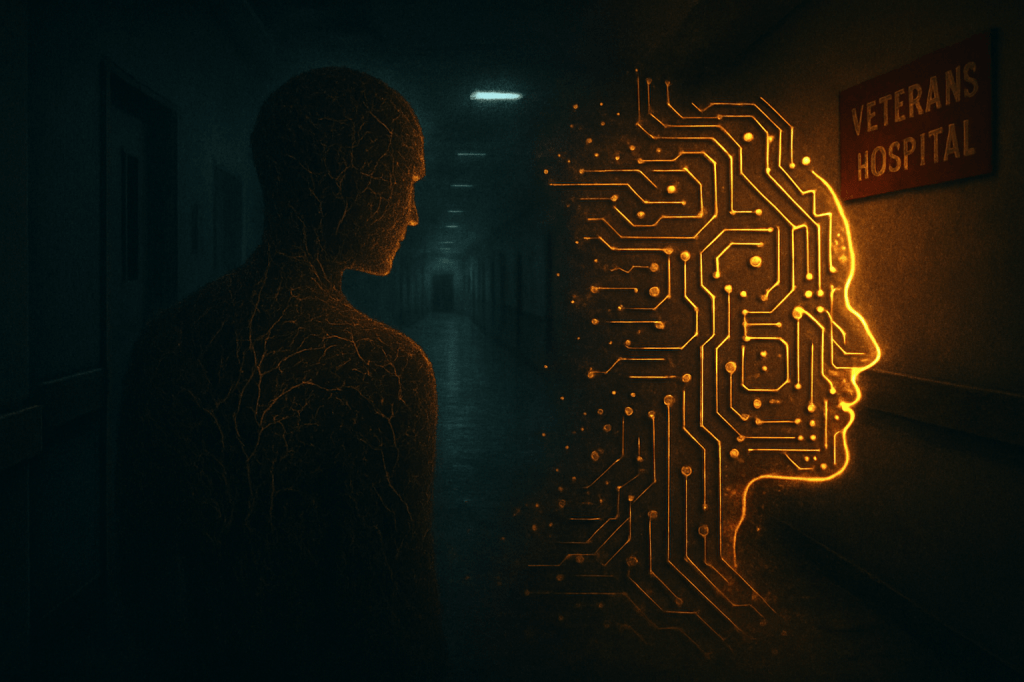

Subtracting Expertise: The VA’s Cuts and the Unasked Questions for an AI-Driven Future

The Department of Veterans Affairs announced a significant workforce reduction yesterday. Although the decision is framed in terms of budget constraints and attrition, it casts a long shadow over the future of specialized human roles in critical sectors. By September 2025, the VA aims to cut approximately 30,000 positions, building on the 17,000 already lost this year. Notably, this includes the earlier dismissal of more than 1,000 probationary employees, many of them researchers working on mental health, cancer treatment, addiction recovery, prosthetics, and burn pit exposure. The official line emphasizes strategic planning and minimizing disruption, but the implications run deeper for anyone watching the accelerating shifts in our professional landscape.

The Unseen Hand: Vulnerability in Specialized Roles

This isn’t a story explicitly about AI replacing human workers. The VA cites budget constraints and attrition. Yet the very nature of the roles being shed (highly specialized, deeply human-centric, and often requiring years of dedicated research and patient interaction) highlights a profound vulnerability. In a world increasingly looking to algorithmic solutions for efficiency and scale, any significant reduction in human capacity, even for financial reasons, raises a critical question: What are we implicitly betting on to fill the eventual void?

These cuts occur in areas where AI holds immense promise for augmentation, from diagnostic support in cancer to personalized mental health interventions. But promise isn’t immediate capability. To diminish human expertise in these precise domains, even as the potential for AI assistance grows, creates a precarious dynamic. Is this a calculated pre-emptive move, assuming future AI will compensate, or a short-sighted divestment in the very human ingenuity needed to ethically and effectively integrate AI into complex care systems?

A Paradox of Progress: Human Capacity vs. Algorithmic Aspiration

The irony is stark. As breakthroughs in AI continue to redefine what’s possible in healthcare research and delivery, the human infrastructure that has traditionally driven these fields is being scaled back. Lawmakers such as Senator Patty Murray and Representative Debbie Wasserman Schultz have voiced immediate concerns about potential staffing shortages and the direct impact on veteran care. This immediate, tangible concern underscores a larger, more abstract dilemma:

- The Human-AI Gap: Who guides the ethical development and deployment of AI in critical areas like mental health or prosthetics if the human experts in those fields are being systematically reduced?

- Loss of Nuance: Can AI truly replicate the nuanced understanding, empathy, and hands-on care provided by experienced human professionals, especially in sensitive areas like addiction recovery or trauma support?

- Premature Optimization? Is the public sector, under fiscal pressure, making decisions that anticipate an AI-driven future before the technology is fully mature or widely integrated for such complex tasks?

- The Hidden Cost of “Efficiency”: While attrition might sound benign, the cumulative loss of institutional knowledge and specialized skill sets can have profound, long-term consequences that outweigh immediate budgetary savings.

This VA decision is a sharp reminder that even when AI isn’t the direct cause of a workforce reduction, its pervasive influence on economic thinking and future planning means every shift in human employment must now be viewed through an AI-tinted lens. It forces us to ask not just “who got replaced?” but “what capacity are we losing, and what are we implicitly trusting technology to provide in its place?” The answer, in this case, remains unsettlingly unclear.