When the Safety Lights Blinked Red Over Your Desk

Yesterday’s most important story about AI and work didn’t come from a lab blog or a startup CEO’s thread. It came from a newspaper masthead. The Guardian’s editorial board looked at a week of safety researchers walking out of leading AI labs and didn’t file it under “industry gossip.” They treated it as an early-warning light for the rest of us, a signal that the business model now steering the most influential systems is no longer aligned with how people need to make decisions at work. Not in the abstract—on the screens we already use to hire, evaluate, buy, and serve.

What made the piece land is what it refused to sensationalize. There’s no mysticism about sentience, no apocalypse countdown. The board made a more immediate claim: if the people hired to say “not yet” are leaving, it’s because “right now” is being optimized for revenue. And when revenue starts dictating interface design, the AI that sits between you and your judgment becomes less of a colleague and more of a channel.

The pivot you can feel in your workflow

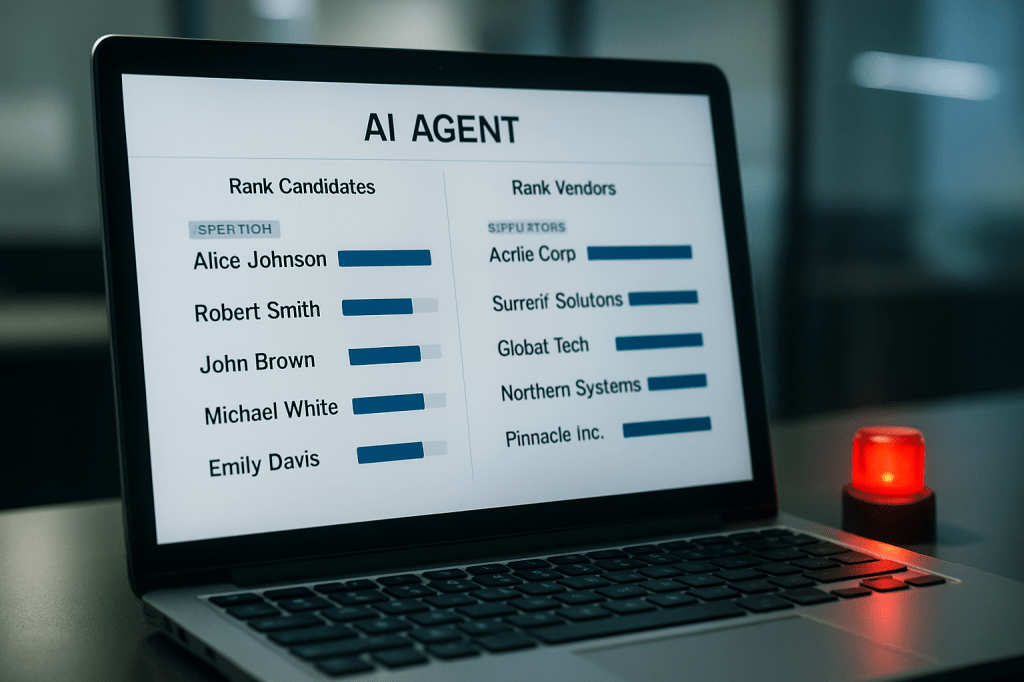

Switch on any of the new agent interfaces and you can see where this is heading. They’re deliberately framed as a primary way to get work done—compose the email, rank the candidates, summarize the brief, propose the vendor. That centrality is commercially irresistible. If the agent becomes the front door, then the doorframe can carry ads, sponsorships, and “suggested” actions. Put that inside a sales team’s forecast meeting or an HR panel, and the line between “assistive prompt” and “paid nudge” isn’t a line anymore; it’s a fog.

The editorial draws a straight line from leadership pedigrees built in engagement-maximization to the product choices workers will live with. The risk isn’t just distraction. It’s subtle manipulation embedded in the act of doing your job. A procurement agent that floats “partner offers” at the top of your shortlist; a performance-review assistant that shapes narrative templates in ways that compress nuance; a triage model that silently learns shortcuts from last quarter’s mislabeled incidents and bakes those errors into this quarter’s escalations. The result is not cinematic catastrophe. It’s something grimmer for day-to-day life: low-quality automation that passes as efficiency because it is fast, cheap, and everywhere.

That’s the other dynamic the board invoked: the slow rot that sets in when short-term monetization wins each product trade-off. You feel it first as friction. Then as distrust. Eventually as resignation: we use the tools we have, even as they get worse, because they are integrated with everything else. Now port that cycle into public services and corporate operations at scale. You don’t just degrade a feed; you degrade decisions.

Safety leaves the room, high stakes walk in

The departures matter because of what follows. Safety teams are the ones who ask to delay a launch, widen an evaluation, harden a fallback. When they exit during a growth sprint, the tendency isn’t toward prudence. It’s toward deployment into precisely the domains where a cheap error becomes an expensive mess—hiring, eligibility, customer remediation, supplier vetting. And those errors are not just wrong answers. They are cascades: a flawed ranking knocks out a candidate who would have corrected a future deficiency; a biased summary tilts an internal narrative that justifies a policy; an unreliable forecast drives a stock move that triggers a hiring freeze that starves the team that could have fixed the model. The loop tightens because the system’s outputs become the next training set’s inputs.

This is why the editorial’s workplace framing matters. It’s not scolding companies for wanting revenue. It’s saying: when the interface that shapes judgment is governed by the same incentives that built ad engines, expect engagement logic to creep into decisions that should be audited, explainable, and above all, corrigible. If you think you can firewall your enterprise use from those incentives by paying for “pro” tiers, history says otherwise. Paywalls change the UX; they don’t detoxify the culture that ships it.

A blueprint exists, and the clock is the real adversary

There is, mercifully, a technical antidote on the table. The International AI Safety Report 2026 isn’t a vibes document. It reads like an operations manual for risk: concrete model evaluations that measure dangerous capabilities; thresholds that trigger containment; “if-then” safety commitments that force pre-commitment before the next capability jump. If you’ve ever written a runbook for a production incident, it feels familiar: decide in the calm what you’ll do in the storm.

The Guardian’s point is straightforward: make this enforceable before the market makes it irrelevant. Voluntary pledges evaporate the second they conflict with quarterly targets. And once a handful of companies become the indispensable substrate for how public agencies, hospitals, and enterprises operate, they graduate into a category we know too well—systems that are too central to fail cleanly and too complex to fix quickly. The editorial calls out the U.S. and U.K. for withholding support, not as a diplomatic jab, but as a practical diagnosis: if the largest markets won’t anchor the blueprint in law, the default regulator will be revenue.

The quiet transfer of power on your team

Strip away the geopolitics and you’re left with a very local redistribution of authority. When a vendor’s agent becomes the way your team initiates work, the vendor owns the first draft of your reasoning. They set what is easy to ask and what is awkward. They define when an answer is “good enough.” They determine which uncertainties are hidden under breezy prose and which are escalated. Over time, that shapes not just outputs but norms: managers who trust the ranking because it’s always there; analysts who stop pushing for raw data because the summary is “close enough”; staff who learn that challenging the agent means challenging the boss who signed the enterprise contract.

Seen this way, yesterday’s editorial wasn’t a sermon. It was a workplace memo written at national scale: your organization is about to rent judgment from companies under pressure to sell influence. If you don’t anchor how that judgment is evaluated, governed, and limited, the product roadmap will do it for you.

What a sane response looks like, starting now

You don’t need to wait for Parliament or Congress to move. The tools in the international report can be internal policy tomorrow. Tie adoption of any agentic system to specific capability evaluations, and define what happens if performance crosses certain risk thresholds—throttling, human-in-the-loop escalation, or deactivation. Make your own “if-then” commitments public inside the company and binding in vendor contracts. Require a clean separation between assistance and commerce: no sponsored slots, no “preferred partners” without explicit labeling, no incentives paid on engagement metrics for tools that influence personnel, finance, or safety decisions. And pre-commit to logging and sharing post-incident analyses with the teams affected, not just the lawyers.
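To make the “if-then” idea concrete, here is a minimal sketch of how a team might write such commitments down as checkable policy rather than as a slide. Everything in it is an assumption for illustration: the metric names, the thresholds, and the `Commitment` and `decide` helpers are hypothetical and are not taken from the international report or from any vendor’s tooling.

```python
from dataclasses import dataclass
from enum import IntEnum


class Action(IntEnum):
    """Responses ordered by severity, so the strictest one can be picked with max()."""
    ALLOW = 0
    THROTTLE = 1
    HUMAN_REVIEW = 2
    DEACTIVATE = 3


@dataclass(frozen=True)
class Commitment:
    """One pre-committed rule: if `metric` reaches `threshold`, then take `action`."""
    metric: str
    threshold: float
    action: Action


def decide(scores: dict[str, float], commitments: list[Commitment]) -> Action:
    """Return the most severe action triggered by the latest evaluation scores.

    A metric that was never measured escalates to human review instead of
    passing silently, so a skipped evaluation cannot count as a clean one.
    """
    triggered = [Action.ALLOW]
    for c in commitments:
        if c.metric not in scores:
            triggered.append(Action.HUMAN_REVIEW)
        elif scores[c.metric] >= c.threshold:
            triggered.append(c.action)
    return max(triggered)


# Hypothetical policy: metric names and thresholds are illustrative only.
POLICY = [
    Commitment("sponsored_result_rate", 0.01, Action.DEACTIVATE),
    Commitment("ranking_error_rate", 0.05, Action.HUMAN_REVIEW),
    Commitment("unlabeled_suggestion_rate", 0.02, Action.THROTTLE),
]

if __name__ == "__main__":
    latest = {
        "sponsored_result_rate": 0.0,
        "ranking_error_rate": 0.08,
        "unlabeled_suggestion_rate": 0.0,
    }
    print(decide(latest, POLICY).name)
```

The design choice worth copying is the ordering: the rules are fixed before the evaluation runs, and the strictest triggered response wins, so nobody gets to negotiate the outcome after seeing the numbers.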

Workers can do something equally powerful: document the moments when the agent subtly sets the frame. Capture the near-misses and the weird nudges. Treat them as system behavior, not personal quirks. Patterns are leverage in policy debates, and in procurement renewals. If the agent is good, this record will validate it. If it’s not, you’ll have more than vibes to counter a glossy slide about productivity.

None of this is anti-innovation. It’s the opposite. Good tools survive scrutiny. Great ones invite it, because the feedback makes them indispensable. The editorial’s uncomfortable claim is that the current incentive stack doesn’t reward that kind of durability. It rewards growth first, explanations later. If you lead a team, your job is to invert that sequence where it touches your decisions. If you legislate, your job is to make that inversion the norm, not the outlier.

The exits from safety teams were the week’s visible event. The larger story is invisible until it isn’t: we are standardizing on intermediaries for judgment. Without guardrails rooted in evaluation, thresholds, and pre-commitment, those intermediaries will be optimized to harvest attention in the very places that most need reflection. Yesterday, a newspaper named the risk. Today, it’s back on your desk, wearing a friendly prompt.