Altman Calls Out AI‑Washing—And Starts the Clock on Real Displacement

It took a hotel hallway in New Delhi to sharpen the jobs debate. Between panels at the India AI Impact Summit, Sam Altman was asked the question that has followed every reorg and earnings call this winter: are “AI layoffs” real, or just a convenient label? His answer was disarmingly plain. Some firms, he said, are engaging in “AI washing”—blaming cuts on artificial intelligence that they would have made anyway. And yet, he added, the genuine effects of automation “will begin to be palpable” in the next few years. It was a split-screen message: skepticism for today’s headlines, urgency for tomorrow’s labor market.

The New Delhi Moment

The setting mattered. This was not a choreographed keynote, but an on-the-fly exchange recorded by CNBC‑TV18 and echoed by outlets that quickly led with the phrase. Stripped of the usual varnish, it sounded less like a talking point and more like a boundary line being drawn. On one side: layoffs wrapped in futuristic language to sanitize old-fashioned cost discipline. On the other: a forecast that the true automation shift is still loading, and when it arrives, it won’t need euphemism to be felt.

That distinction lands because the incentives to mislabel are obvious. A leadership team that attributes reductions to AI can present itself as both ruthless and visionary: cutting today while signaling alignment with the next platform. The label can deflect blame from strategy errors, soothe investors with a modern story, and telegraph to remaining employees that the firm is leaning into tools competitors might fear. Narrative arbitrage has never been more tempting.

The Numbers That Cut Through the Theater

There is data to cool the hype. Challenger, Gray & Christmas tallied 108,435 announced U.S. layoffs in January 2026. Of those, only 7% explicitly cited AI. Across 2025, the share was 4.5%. No one should pretend attribution is clean: AI can be the invisible hand behind “restructuring,” or the accelerant that makes an underperforming unit suddenly indefensible. But the small explicit share supports Altman’s claim: a chunk of what’s being branded as AI-driven today is likely just the same corporate playbook with a new dust jacket.
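For a sense of scale, the rounded January share can be turned into an implied headcount. This is a back-of-envelope sketch, not a figure from the Challenger report: because the 7% is rounded, the exact tally may differ by a few hundred. The variable names are illustrative.

```python
# Figures quoted in the text above.
january_2026_layoffs = 108_435   # announced U.S. layoffs, January 2026
ai_cited_share = 0.07            # share explicitly citing AI (rounded)

# Implied number of announced cuts that explicitly cited AI.
implied_ai_cited = round(january_2026_layoffs * ai_cited_share)
implied_other = january_2026_layoffs - implied_ai_cited

print(f"Implied AI-cited cuts: about {implied_ai_cited:,}")
print(f"Cuts citing other reasons: about {implied_other:,}")
```

On these numbers, roughly 7,600 of the announced cuts named AI, against about 100,000 that did not.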

Precision matters because statistics like these are upstream of real-world decisions. If policymakers overread an AI layoff wave that isn’t yet here, they risk blunt interventions—taxes, moratoria, compliance hurdles—that miss the mechanism of displacement and slow down productivity gains. If workers and schools underread it, they may slip into an optimism trap, postponing the reskilling that will be expensive, time-consuming, and, for many roles, nonoptional.

Why the Double Message Is Strategic

Altman’s calibration is not just philosophical; it’s operational. OpenAI and its peers are in the middle of transforming copilots into agents—systems that not only draft but also decide, transact, and integrate across back-office systems. That shift is where headcount pressure grows legs, because tasks that were once guarded by human-in-the-loop checkpoints become end-to-end automations. Saying “not yet” on layoffs buys social trust; saying “soon” preserves urgency and pushes companies and governments to build absorptive capacity before the curve steepens.

There’s also reputational risk management at work. If the public concludes that “AI layoffs” are mostly cover stories, AI leaders will be accused of enabling corporate theater. If, later, the real displacement wave hits without preparation, those same leaders will wear the blame for minimizing it. The only durable posture is to separate the signal from the noise now, and simultaneously insist that the signal will get louder.

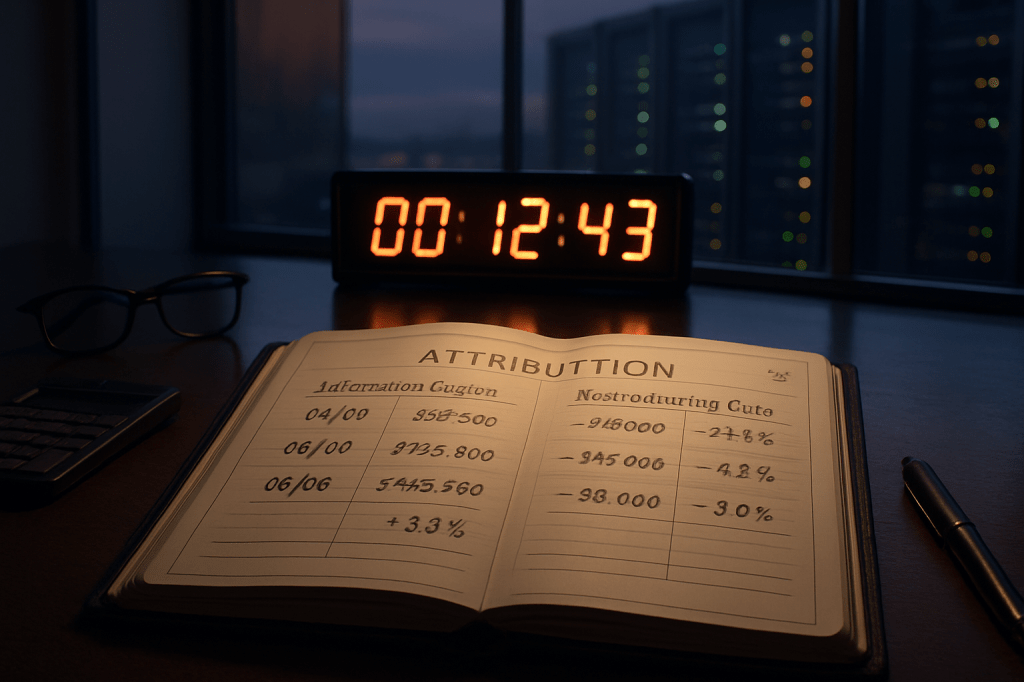

What Honest Attribution Would Look Like

The fix is not complicated, but it is inconvenient. Companies should disclose layoff rationales with specificity that survives an audit. If AI is the driver, show your work: identify the processes automated, the software deployed, and the throughput gains realized. If the cause is macro headwinds, strategy pivots, or investor pressure, say that—without reaching for a buzzword to tidy the narrative. Internally, CFOs and CHROs can maintain an attribution ledger that distinguishes automation savings from budget cuts, and tie those entries to unit-level productivity data. This is not just governance; it’s a forward indicator. If the ledger shows automation-led output climbing without commensurate hiring, the “palpable” phase Altman referenced is nearing.
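One way to picture such an attribution ledger is as a small structured record per workforce change. The sketch below is purely illustrative: the field names, the two cause categories, and the sample figures are all assumptions made for the example, not a format any company or regulator is known to use.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Cause(Enum):
    AUTOMATION = "automation"   # roles removed because a process was automated
    BUDGET = "budget"           # roles removed for cost or strategy reasons


@dataclass(frozen=True)
class LedgerEntry:
    unit: str             # business unit (hypothetical names below)
    cause: Cause
    roles_removed: int
    output_before: float  # unit-level output per period before the change
    output_after: float   # unit-level output per period after the change


def roles_by_cause(entries):
    """Total roles removed, split by stated cause."""
    totals = defaultdict(int)
    for entry in entries:
        totals[entry.cause] += entry.roles_removed
    return dict(totals)


def automation_signal(entries):
    """Units where automation-attributed cuts coincide with rising output."""
    return sorted({
        entry.unit
        for entry in entries
        if entry.cause is Cause.AUTOMATION
        and entry.output_after > entry.output_before
    })


# Made-up sample data, for illustration only.
ledger = [
    LedgerEntry("support", Cause.AUTOMATION, 12, 1000.0, 1150.0),
    LedgerEntry("marketing", Cause.BUDGET, 8, 400.0, 360.0),
    LedgerEntry("qa", Cause.AUTOMATION, 5, 300.0, 290.0),
]

print(roles_by_cause(ledger))
print(automation_signal(ledger))
```

Tying each entry to before-and-after output is what makes the ledger auditable: an "automation" entry with falling output, like the hypothetical `qa` row, is exactly the kind of claim that would invite a second look.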

On the other side of the ledger, under-attribution is just as corrosive. Some teams are quietly using AI to collapse cycle times—customer support, quality assurance, content ops—without advertising it, either to avoid scrutiny or to protect morale. That stealth can delay reskilling budgets and leave affected workers with less time to pivot. Transparency is a two-edged responsibility.

Reading the Next Three Years

Expect displacement to arrive first as role hollowing rather than mass elimination. Titles remain, but the job’s center of gravity shifts to oversight, exception handling, and orchestration across tools. The second phase comes when organizations re-architect around what the tools do natively—fewer handoffs, fewer approvals, tighter loops from demand signal to fulfillment. That is when headcount plans move from attrition-only to structural reductions. None of this requires sci‑fi leaps; it requires managers who trust the systems enough to redesign work around them.

For workers whose roles are being reshaped this way, clarity beats vibes. If your company is waving AI as the reason for cuts without a concrete process map, push for one. If your leadership insists AI isn’t relevant to your function, assume it will be and invest in the interfaces (prompting, retrieval design, evaluation, agent policy, data hygiene) that turn general models into domain leverage. The difference between being automated and being the person who directs automation is often a quarter’s worth of focused skill-building, undertaken before incentives make the choice for you.

The Policy Translation

Governments should treat Altman’s remark as a call for attribution standards, not for interventionist theatrics. Require clear causality in public layoff disclosures. Fund transition programs that start before displacement peaks, targeted at tasks with demonstrable near-term automation exposure. Encourage measurement: shared taxonomies for what “AI-caused” means, common evaluation suites for job-relevant model performance, and incentives for firms that publish impact audits alongside their adoption roadmaps. The goal is to replace today’s rhetorical fog with time-stamped, comparable evidence.

The irony is that getting the narrative right won’t slow AI’s march into workflows. It will, however, make the social contract legible enough to survive it. In New Delhi, Altman named the promotional sleight of hand while pointing to a very real horizon. Believe him on both counts, and plan accordingly.