Two Tenths That Changed the Conversation

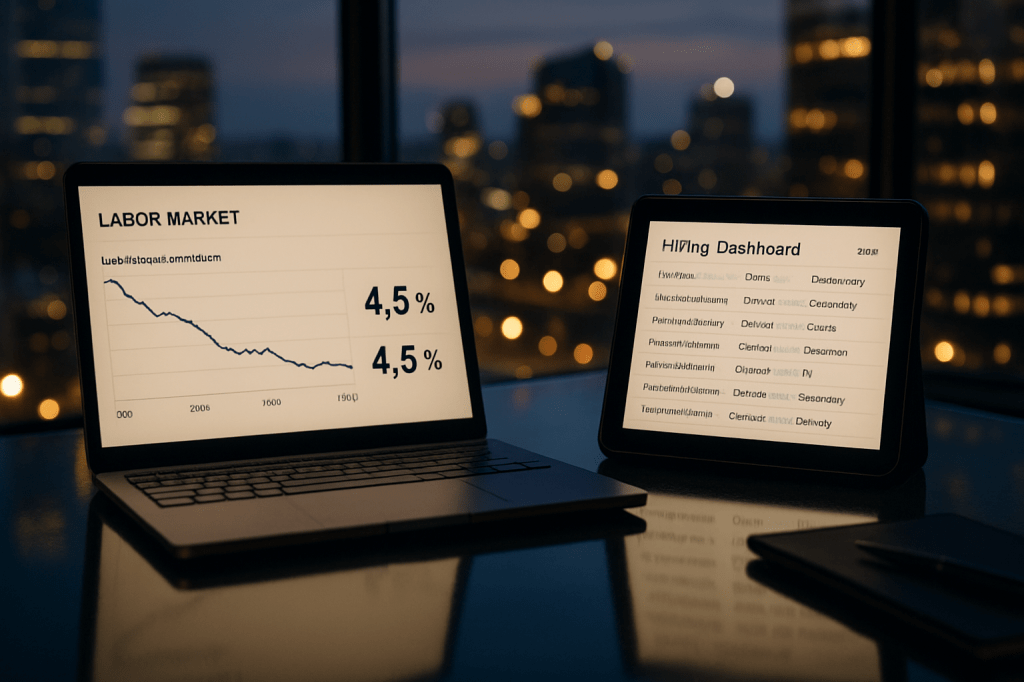

It wasn’t a screaming headline, more a quiet nudge: a Goldman Sachs Research note, led by economist Pierfrancesco Mei, said the part of unemployment linked to AI is now visible in the data and is likely to push the U.S. jobless rate a bit higher by year’s end. The note sees the rate moving from roughly 4.3% today to near 4.5%, with around two-tenths of a percentage point reflecting the substitution of software for human tasks. Markets didn’t need another think piece; they needed a number. They got one.

The power of that estimate isn’t its size (two-tenths won’t jolt the business cycle) but its meaning. This is a shift from theoretical displacement to measured displacement. Goldman isn’t forecasting a mass layoff shock. It’s describing a diffusion pattern: automation stepping into well-defined, repetitive tasks in the corners of the economy most ready for it, turning some hiring pipelines into non-replacements and some net job growth into net shrinkage. The bank says the aggregate effect is still moderate. But it’s in the spreadsheets now.

When “Slight” Becomes Signal

Two-tenths of a percentage point can feel abstract. Translate it to people and you get a rough scale: with a labor force on the order of 170 million, that’s about 340,000 additional unemployed compared with a counterfactual in which AI was not being used to cut costs. That won’t define the macro year, yet it will define the experience of specific occupations and local economies where exposure is concentrated.
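
For readers who want to check the conversion, here is a minimal sketch of the arithmetic. It uses only the round figures quoted above (a labor force of about 170 million and an AI-linked effect of 0.2 percentage points); the function and variable names are illustrative and do not come from the Goldman note.

```python
def additional_unemployed(labor_force: int, rate_change_pp: float) -> int:
    """Convert a change in the unemployment rate (percentage points) to a headcount."""
    return round(labor_force * rate_change_pp / 100)


# Round figures taken from the article; both are approximations.
LABOR_FORCE = 170_000_000   # "on the order of 170 million"
AI_EFFECT_PP = 0.2          # two-tenths of a percentage point

estimate = additional_unemployed(LABOR_FORCE, AI_EFFECT_PP)
print(f"Approximate additional unemployed: {estimate:,}")
```

Because the labor force figure is itself a rounded estimate, the result is best read as an order of magnitude rather than a precise count.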

Goldman points to a slowdown in job growth overall and outright declines inside sub-industries most ready for AI rollouts. That’s compatible with what many operations leaders quietly describe: fewer backfills for support roles, more pilot projects graduating into production for document processing, reconciliation, content operations, and first-draft code generation. The visible layoff is just one route. Another is the vacancy that never gets posted because a workflow, once split among three people, is now handled by one analyst and a model. The unemployment rate doesn’t care which route delivered the separation; it counts the end state.

The Clock Speed of Adoption

Goldman characterizes the impact as incremental, with risks tilted toward “slightly larger” if adoption steps up faster than anticipated. That asymmetry matters. AI deployment rarely advances in a straight line. It clusters around budget cycles, vendor maturity, and legal green lights. If CFOs, emboldened by better accuracy and tighter governance, pull forward deployments in the second half, the labor effect can arrive as a series of abrupt steps rather than a smooth slope. Pair that with the bank’s same‑day macro view (growth near 2.5% Q4/Q4, with an equity correction flagged as a key risk) and you can sketch the loop: a market wobble prompts management to accelerate efficiency moves they were already piloting, amplifying the AI substitution impulse just as demand wavers.

Continuity, Not a Reversal

None of this departs from Goldman’s longer-run framework. Their prior work maps the occupations most at risk—programming-adjacent tasks, portions of accounting, and various support roles—and argues the unemployment bump should be modest and temporary, on the order of half a percentage point, before productivity gains and new roles reabsorb displaced workers. Yesterday’s call is a near-term calibration: not a new thesis, but the first mile marker where the data catches up to the model.

Whether “temporary” holds depends on the plumbing. Productivity has to translate into lower effective prices or new product features, raising demand enough to hire into different skill mixes. Training and mobility have to keep pace so that a back-office specialist can credibly pivot into model supervision, data quality, workflow design, or client-facing roles. Policy choices—from credential flexibility to immigration for technical talent—will determine how quickly frictions give way to absorption.

Where to Look as 2026 Unfolds

If you want to see the mechanism rather than its aggregate shadow, watch the micro. Payroll lines in AI-exposed sub-industries will tell you whether negative growth is deepening or stabilizing. Job postings for routine support work will reveal whether firms are quietly retiring requisitions. Earnings calls will surface a new dialect of efficiency—references to “automation leverage” and “headcount optimization through tooling” in segments that once scaled by adding people. Wage drift for entry-level analysts and junior developers will betray the bargaining power shift. And in the claims data, sectoral skews will hint at where retraining needs to run hottest.

The Distribution Problem

Even if the nationwide move is small, the distribution won’t be. Two-tenths on average can feel like two percentage points in a metro anchored by shared-services centers or content operations. The early footprint of generative systems is in tasks with well-defined inputs and outputs; that maps onto specific employers, campuses, and cities. The average is merciful; the variance is not.
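
The gap between the average and the local experience is a matter of weighted averages, and a small sketch makes it concrete. The regions and figures below are entirely hypothetical, chosen only so that the national total lines up with the roughly 0.2-point effect discussed above; they are not drawn from Goldman's note or from any dataset.

```python
# Hypothetical regions: (labor force, additional unemployed attributed to AI).
# Illustrative numbers only, picked so the national change comes out near 0.2 points.
regions = {
    "exposed_metros": (10_000_000, 200_000),
    "rest_of_country": (160_000_000, 140_000),
}


def rate_change_pp(labor_force: int, added_unemployed: int) -> float:
    """Change in the unemployment rate, in percentage points."""
    return 100 * added_unemployed / labor_force


# Local effect in each hypothetical region.
local = {name: rate_change_pp(lf, added) for name, (lf, added) in regions.items()}

# National effect: pool the headcounts, then compute the rate change once.
total_lf = sum(lf for lf, _ in regions.values())
total_added = sum(added for _, added in regions.values())
national = rate_change_pp(total_lf, total_added)

for name, pp in local.items():
    print(f"{name}: {pp:.2f} pp")
print(f"national: {national:.2f} pp")
```

In this made-up split, the exposed metros absorb a two-point rise while the rest of the country barely moves, yet the national figure still reads as two-tenths.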

The Year the Numbers Arrived

Goldman’s note didn’t settle the debate; it disciplined it. For a year crowded with AI promises, this is the first mainstream forecast to say, with a straight face and a spreadsheet, that unemployment will be higher by December partly because software got better. The bank still expects above-trend growth. It still sees the labor effect as real but not dominant. But the story is no longer hypothetical. The models are running, the headcounts are adjusting, and the labor market is beginning to register AI not as a slogan, but as a small, discernible pressure. In a data-driven economy, that’s how eras start: not with a crash, but with two-tenths that everyone can see.