Jensen Huang’s New Law of Work: Faster Beats Fewer

GTC was supposed to be about silicon. Instead, the loudest sound in the room was a labor forecast delivered from the stage where the GPU era keeps renewing itself. Nvidia’s Jensen Huang did not hedge. “A lot of people are saying AI is coming, we’re going to run out of jobs — but it’s exactly the opposite,” he said, using Nvidia’s own cadence as the exhibit: work cycles compress, deliverables fly back upstream, and managers receive more, not less, to decide and ship. In one stroke he flipped the script that has haunted break rooms and policy hearings for years. If you came for a layoff narrative, you left with a throughput thesis.

The messenger made it consequential

Plenty of founders talk augmentation. What made this different was the venue and the balance sheet behind it. GTC is the gravitational center of the AI buildout, and Nvidia is the rare firm whose choices actually raise or lower the economy’s compute ceiling. When its CEO says the near-term effect of AI is acceleration, boardrooms hear not a pep talk but an operating assumption. The story becomes self-fulfilling: when the platform supplier frames AI as a speed multiplier, the buyers plan for speed. Tone is strategy.

The throughput paradox arrives at work

Huang’s claim rests on a familiar but under-acknowledged dynamic, one economists know as the Jevons paradox: when you reduce the cost of producing a unit of something (here, drafts, analyses, prototypes), you rarely get less of it. You get a flood. The backlog expands to meet the new capacity; cycle time collapses, expectations rise, and the definition of “done” stretches to include the improvements that used to be out of scope. In that world, headcount doesn’t evaporate overnight. It reorganizes around orchestration, review, escalation, and integration. The danger for workers is not pink slips but pace: your calendar accelerates until the bottleneck moves to you.

The playbook hidden in plain sight

The practical reading of Huang’s comments is a two-step: augment first, then reorganize. First, give every team an agentic assistant and watch output spike. Next, redraw roles around the new flow: fewer pure producers, more conductors; fewer meetings about status, more decisions at the edge with automated guardrails; fewer bespoke workflows, more pipelines that assume contributions from software collaborators working at odd hours. If you manage people, your span of control just widened. If you run HR, your competency model tilts toward prompt design, exception handling, and systems thinking. If you own budgets, your new scarce resource isn’t headcount; it’s attention.

The safety clause is a strategy, too

Huang added a constraint that sounded almost obvious—AI shouldn’t break the law or promise functionality it shouldn’t have—but it functions like a design brief for the next hiring wave. Procurement shifts from model horsepower to claims reliability. Legal demands provenance and audit. Product leads start measuring “trust surface area” the way SREs measure error budgets. In short: the companies that win the acceleration game will be the ones that industrialize honesty, because nothing stalls a faster factory like a recall.

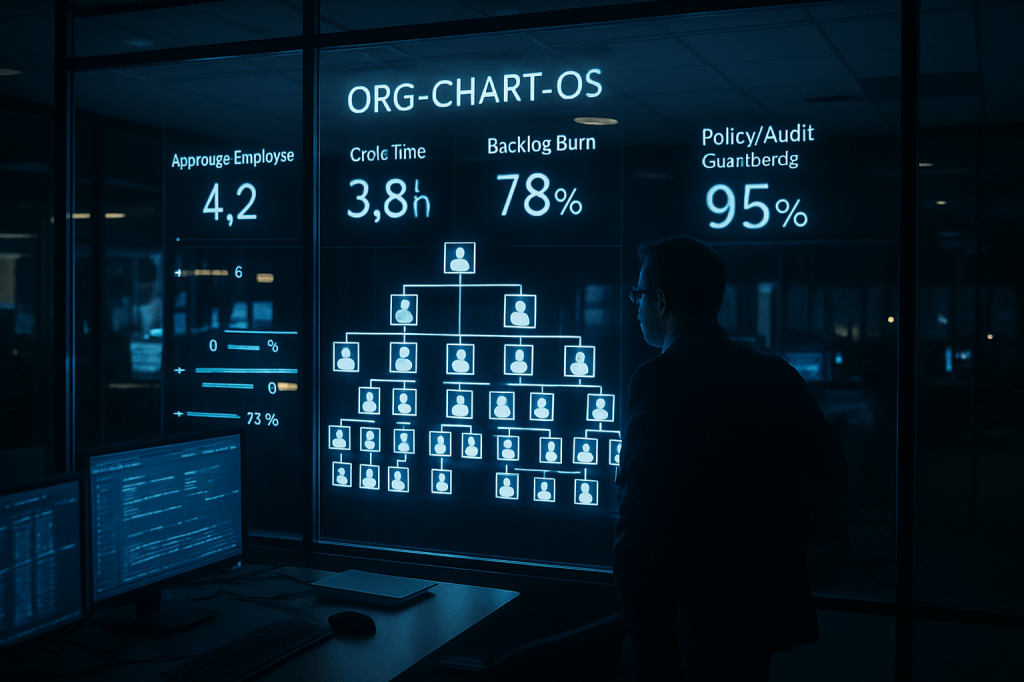

The decade-long sketch: a small crew, a million hands

The long-run picture Huang floated—lean human cores complemented by swarms of software agents—turns the org chart into an operating system. Work becomes a choreography of persistent, specialized processes that never sleep, with humans stepping in for judgment calls, taste, negotiation, and the weird corners of reality where pattern matching still fails. The implications are enormous. Identity and accountability move from “who did this?” to “which agent run produced this, under what policy, with which data?” Middle management evolves into fleet management. Training stops being a classroom and starts looking like CI/CD for skills. And a new kind of labor question appears: not whether algorithms replace us, but how we govern the shadow workforce we can spin up with a YAML file.

What this changes starting now

Expect earnings calls to absorb this framing within a quarter. The tell will be language: less emphasis on headcount optimization, more on cycle time, backlog burn, and the ratio of agents to employees. Inside firms, watch job postings pivot from generic “AI familiarity” to explicit collaboration skills—owning an agent’s roadmap, instrumenting feedback loops, authoring policies that keep automation both productive and truthful. If the data follows, we’ll see throughput rise first, internal mobility second, and only later the slow, careful redistribution of roles that accompanies any platform shift.

The uncomfortable truth in Huang’s optimism is that acceleration is not a free lunch. It buys us more work than we planned for and demands a maturity few companies have: the ability to scale judgment as quickly as they scale generation. But if you accept his premise, the anxiety about replacement gives way to a harder, more adult question: can we build organizations that deserve the speed we’ve just unlocked?